AI ECD

2024

Enhancing early childhood development through AI-powered evaluations

I joined AI ECD (now KidooAI) at the pre-seed stage as the lead designer responsible for bringing their MVP to life. With no existing design foundation, I led the end-to-end design of their dashboard, AI-assisted evaluation, and evaluation report, navigating the unique challenge of building an experience that had to work for two very different users: parents seeking clarity, and children as young as three taking an assessment.

Role

Lead Product Designer

Team

3 Product Designers

2 Developers

1 Product Manager

Timeline

May – September 2024

Tool

Figma

Context

AI ECD is a startup using generative AI to bring scientifically based early childhood development evaluations directly to families. Their platform combines advanced AI technology with established developmental research to assess children across key learning domains. The result is a reliable, accessible tool that gives parents meaningful insight into their child's growth and the personalized guidance to support it.

Problem

AI ECD's founder spent years researching early childhood development at Stanford and consistently saw the same problem: parents who wanted to support their child's growth but couldn't access the tools to do it. Formal developmental assessments are expensive, hard to interpret, and often out of reach for the average family. We asked ourselves:

How might we give parents the clarity and confidence of a professional developmental assessment without the cost, complexity, or need for a clinic?

Solution

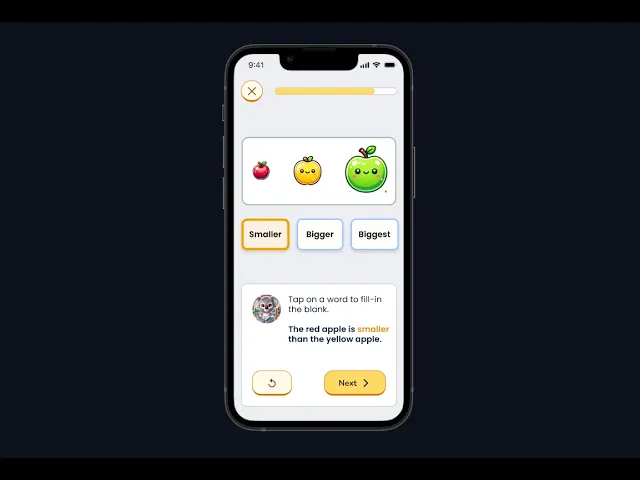

To address this, we designed an AI-powered Developmental Evaluation, a game-based assessment that engages children through interactive tasks while measuring their progress across key developmental domains.

The evaluation results serve as the foundation for the rest of the app, informing personalized reports, activity recommendations, and guidance tailored to each child.